---
title: Elastic Inference Service
description: Use Elastic Inference Service (EIS) to run inference for search, embeddings, and chat without deploying models in your environment.
url: https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/elastic-inference/eis
products:
- Elastic Cloud Enterprise
- Elastic Cloud on Kubernetes
- Elastic Stack
applies_to:
- Elastic Cloud Serverless: Generally available
- Elastic Stack: Generally available
---

# Elastic Inference Service

Elastic Inference Service (EIS) lets you use AI-powered search as a service without deploying a model in your environment.

With EIS, you don't need to add, configure, and scale machine learning nodes to provide the infrastructure and resources that machine learning inference requires.

Instead, you can use machine learning models for ingest, search, and chat independently of your Elasticsearch infrastructure.

You can use EIS with your [self-managed](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/deploy-manage/deploy/self-managed) cluster through Cloud Connect (Elastic Stack: Generally available since 9.3). For details, refer to [EIS for self-managed clusters](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/elastic-inference/connect-self-managed-cluster-to-eis).

## AI features powered by EIS

- Your Elastic deployment or project comes with [Elastic Managed LLMs](https://www.elastic.co/docs/reference/kibana/connectors-kibana/elastic-managed-llm) by default. You can use these LLMs in Agent Builder, the AI Assistant, Attack Discovery, Automatic Import, and Search Playground. For the list of available models, refer to [Supported models](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/elastic-inference/eis-supported-models).

- You can use [ELSER](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/machine-learning/nlp/ml-nlp-elser) to perform semantic search as a service (ELSER on EIS). (Elastic Stack: Generally available since 9.2, Preview in 9.1; Elastic Cloud Serverless: Generally available)

- You can use the [`jina-embeddings-v3`](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/machine-learning/nlp/ml-nlp-jina#jina-embeddings-v3) multilingual dense vector embedding model to perform semantic search through EIS. (Elastic Stack: Preview since 9.3; Elastic Cloud Serverless: Preview)

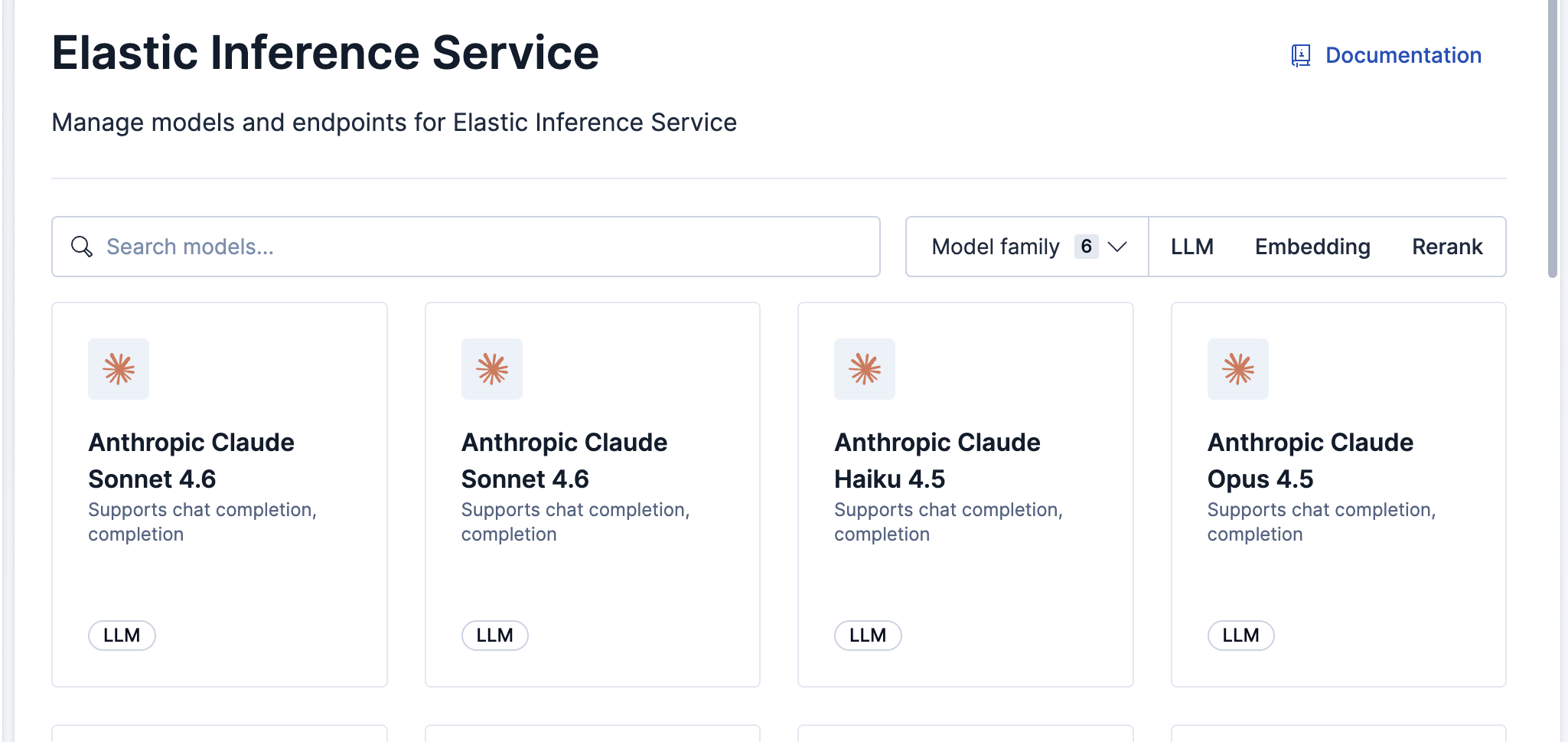

## Manage your models

Kibana provides two interfaces for managing EIS models and endpoints: the **Elastic inference** page and the **Inference endpoints** page.

Go to the **Elastic inference** page by using the navigation menu or the [global search field](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/find-and-organize/find-apps-and-objects).

To access **Elastic inference**, you need the `Inference Endpoints: all` and `Advanced Settings: read` Kibana privileges.

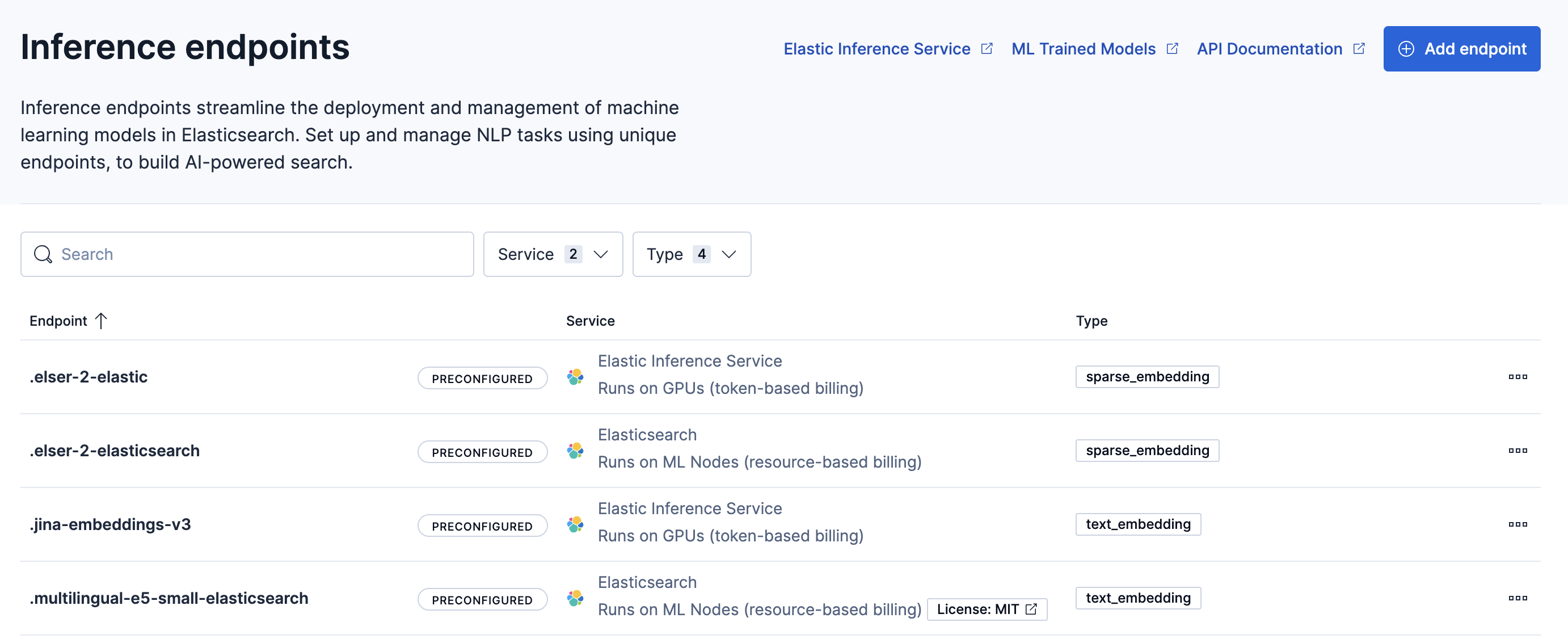

Go to the **Inference endpoints** page by using the navigation menu or the [global search field](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/find-and-organize/find-apps-and-objects).

Available actions include:

- Add endpoints

- View endpoint details

- Copy the inference endpoint ID

- Delete endpoints
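The same list, view, and delete actions are also available through the Elasticsearch inference APIs. The following Python sketch only builds the HTTP requests (it does not send them) so you can see which paths correspond to each action. The endpoint ID `my-eis-endpoint` is a hypothetical placeholder, and the shape of the list response (`{"endpoints": [...]}`) is an assumption; check the inference API reference for your version.

```python
from dataclasses import dataclass
from typing import Any, Optional
from urllib.parse import quote


@dataclass
class Request:
    """An HTTP request to the Elasticsearch inference API (built, not sent)."""
    method: str
    path: str
    body: Optional[dict[str, Any]] = None


def list_endpoints() -> Request:
    # Lists every inference endpoint, including the preconfigured defaults.
    return Request("GET", "/_inference/_all")


def get_endpoint(inference_id: str) -> Request:
    # Shows the configuration (service, task type, settings) of one endpoint.
    return Request("GET", f"/_inference/{quote(inference_id, safe='')}")


def delete_endpoint(inference_id: str) -> Request:
    # Deletes one endpoint by its inference ID.
    return Request("DELETE", f"/_inference/{quote(inference_id, safe='')}")


def endpoint_ids(list_response: dict[str, Any]) -> list[str]:
    # Assumption: the list response looks like {"endpoints": [{"inference_id": ...}, ...]}.
    return [e["inference_id"] for e in list_response.get("endpoints", [])]


# Hand-written sample list response, for illustration only.
sample = {"endpoints": [{"inference_id": ".elser-2-elastic", "task_type": "sparse_embedding"}]}
print(endpoint_ids(sample))  # ['.elser-2-elastic']
print(get_endpoint("my-eis-endpoint"))
```

Copying an endpoint ID in the UI corresponds to reading `inference_id` from the list or get response.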

## Add endpoints

Your deployment includes preconfigured default inference endpoints that are ready to use.

In most cases, use these default endpoints.

Create a custom EIS endpoint only if you need a specific model version or configuration that the defaults don't cover.

To add an endpoint from the **Elastic inference** page:

1. Go to the **Elastic inference** page by using the navigation menu or the [global search field](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/find-and-organize/find-apps-and-objects).

2. Select the model you want the new endpoint to use.

3. Select **Add endpoint**.

4. Enter a unique **Model ID**. For a complete list of valid Model IDs and their corresponding task types, refer to [Supported models](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/elastic-inference/eis-supported-models).

5. Select **Save**.

To add an endpoint from the **Inference endpoints** page:

1. Go to the **Inference endpoints** page by using the navigation menu or the [global search field](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/find-and-organize/find-apps-and-objects).

2. In the **Service** dropdown, select **Elastic Inference Service**.

3. In the **Settings** section, enter the specific **Model ID**. For a complete list of valid Model IDs and their corresponding task types, refer to the [Elastic Inference Service supported models](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/explore-analyze/elastic-inference/eis-supported-models).

4. (Optional) Under **More options**, set the **Maximum Input Tokens**. This limits the number of tokens processed per request. If left blank, the model's default limit is used.

5. Expand **Additional settings** and select the **Task type** that corresponds to your model.

6. Select **Save**.

Alternatively, you can use [inference APIs](https://www.elastic.co/docs/api/doc/elasticsearch/group/endpoint-inference), as described in the following section.
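As a rough sketch of how the form fields map to the inference API, the following Python builds (but does not send) a `PUT _inference/<task_type>/<inference_id>` request for an EIS endpoint. The endpoint ID `my-jina-v3` is hypothetical, the task-type set is a subset chosen for illustration, and placing **Maximum Input Tokens** under `service_settings.max_input_tokens` is an assumption; verify it against the inference API reference before relying on it.

```python
import json
from typing import Any, Optional

# Subset of inference API task types, for illustration only.
# Check Supported models for the task type each EIS model supports.
TASK_TYPES = {"text_embedding", "sparse_embedding", "rerank", "completion", "chat_completion"}


def create_eis_endpoint(
    task_type: str,
    inference_id: str,
    model_id: str,
    max_input_tokens: Optional[int] = None,
) -> tuple[str, str, dict[str, Any]]:
    """Build (method, path, body) for PUT _inference/<task_type>/<inference_id>."""
    if task_type not in TASK_TYPES:
        raise ValueError(f"unsupported task type: {task_type}")
    # "Service: Elastic Inference Service" in the form corresponds to "service": "elastic".
    service_settings: dict[str, Any] = {"model_id": model_id}
    if max_input_tokens is not None:
        # Assumption: the form's Maximum Input Tokens maps to service_settings.max_input_tokens.
        service_settings["max_input_tokens"] = max_input_tokens
    body = {"service": "elastic", "service_settings": service_settings}
    return "PUT", f"/_inference/{task_type}/{inference_id}", body


method, path, body = create_eis_endpoint("text_embedding", "my-jina-v3", "jina-embeddings-v3")
print(method, path)
print(json.dumps(body, indent=2))
```

Leaving `max_input_tokens` unset mirrors leaving the form field blank, so the model's default limit applies.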

## Use cases

The following sections describe how to get started with specific models available through Elastic Inference Service, including creating inference endpoints and using them for search and ingest.

### `jina-embeddings-v5-text-small` on EIS

- Elastic Cloud Serverless: Preview

- Elastic Stack: Planned

You can use the `jina-embeddings-v5-text-small` model through Elastic Inference Service. On EIS, the model runs on GPUs, and you don't need to manage infrastructure or model resources.

#### Get started with `jina-embeddings-v5-text-small` on EIS

Create an inference endpoint that references the `jina-embeddings-v5-text-small` model in the `model_id` field.

```json
{
  "service": "elastic",
  "service_settings": {
    "model_id": "jina-embeddings-v5-text-small"
  }
}
```

The created inference endpoint uses the model for inference operations on EIS. You can reference the endpoint's `inference_id` in index mappings for the [`semantic_text`](https://docs-v3-preview.elastic.dev/elastic/elasticsearch/tree/main/reference/elasticsearch/mapping-reference/semantic-text) field type, in `text_embedding` inference tasks, or in search queries.
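To make those two uses concrete, here is a minimal Python sketch that builds (without sending) an index-creation body with a `semantic_text` field and a direct `text_embedding` inference request. The endpoint ID `my-jina-v5-endpoint`, the index `my-index`, and the field `content` are hypothetical; substitute the `inference_id` you chose when creating the endpoint.

```python
import json
from typing import Any

# Hypothetical names: replace with your own endpoint ID, index, and field.
INFERENCE_ID = "my-jina-v5-endpoint"
INDEX = "my-index"


def semantic_text_mapping(field: str, inference_id: str) -> dict[str, Any]:
    # Body for PUT /<index>: the semantic_text field generates embeddings
    # through the referenced endpoint when documents are ingested.
    return {"mappings": {"properties": {field: {"type": "semantic_text", "inference_id": inference_id}}}}


def text_embedding_request(inference_id: str, texts: list[str]) -> tuple[str, str, dict[str, Any]]:
    # Calls the endpoint directly: POST _inference/text_embedding/<inference_id>.
    return "POST", f"/_inference/text_embedding/{inference_id}", {"input": texts}


print("PUT", f"/{INDEX}")
print(json.dumps(semantic_text_mapping("content", INFERENCE_ID), indent=2))
print(*text_embedding_request(INFERENCE_ID, ["How do I use EIS?"]))
```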

### `jina-embeddings-v3` on EIS

- Elastic Cloud Serverless: Preview

- Elastic Stack: Preview since 9.3

You can use the `jina-embeddings-v3` model through Elastic Inference Service. On EIS, the model runs on GPUs, and you don't need to manage infrastructure or model resources.

#### Get started with `jina-embeddings-v3` on EIS

Create an inference endpoint that references the `jina-embeddings-v3` model in the `model_id` field.

```json
{
  "service": "elastic",
  "service_settings": {
    "model_id": "jina-embeddings-v3"
  }
}
```

The created inference endpoint uses the model for inference operations on EIS. You can reference the endpoint's `inference_id` in index mappings for the [`semantic_text`](https://docs-v3-preview.elastic.dev/elastic/elasticsearch/tree/main/reference/elasticsearch/mapping-reference/semantic-text) field type, in `text_embedding` inference tasks, or in search queries.
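For the search-query case, a `semantic` query against a `semantic_text` field embeds the query text with the endpoint configured on that field. The following Python sketch builds such a search body without sending it; the index `my-index` and field `content` are hypothetical and assume the field is mapped to your `jina-embeddings-v3` endpoint.

```python
import json
from typing import Any

# Hypothetical names: an index whose "content" field is a semantic_text
# field mapped to your jina-embeddings-v3 endpoint.
INDEX = "my-index"
FIELD = "content"


def semantic_search_body(field: str, query_text: str, size: int = 5) -> dict[str, Any]:
    # The semantic query uses the inference endpoint from the field's mapping,
    # so the query itself does not name an inference_id.
    return {"size": size, "query": {"semantic": {"field": field, "query": query_text}}}


print("GET", f"/{INDEX}/_search")
print(json.dumps(semantic_search_body(FIELD, "multilingual vector search"), indent=2))
```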

### ELSER through Elastic Inference Service (ELSER on EIS)

- Elastic Cloud Serverless: Generally available

- Elastic Stack: Generally available since 9.2

- Elastic Stack: Preview in 9.1

ELSER on EIS lets you run the ELSER model on GPUs without managing your own ML nodes. Compared with ML nodes, we expect higher ingest throughput and equivalent search latency. We will continue to benchmark, remove limitations, and address concerns.

#### Using the ELSER on EIS endpoint

You can now use `semantic_text` with the new ELSER endpoint on EIS. To learn how to use the `.elser-2-elastic` inference endpoint, refer to [Using ELSER on EIS](https://docs-v3-preview.elastic.dev/elastic/elasticsearch/tree/main/reference/elasticsearch/mapping-reference/semantic-text-setup-configuration).
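As a minimal sketch, assuming an index named `my-elser-index` and a field named `content` (both hypothetical), the following Python builds the requests to map a `semantic_text` field to the `.elser-2-elastic` endpoint, index a plain-text document, and call the endpoint directly as a `sparse_embedding` task. Nothing is sent; the requests are only printed.

```python
import json
from typing import Any

ELSER_EIS_ID = ".elser-2-elastic"  # endpoint ID named on this page
INDEX = "my-elser-index"  # hypothetical index name


def elser_mapping(field: str) -> dict[str, Any]:
    # semantic_text field backed by ELSER on EIS; sparse embeddings are produced at ingest.
    return {"mappings": {"properties": {field: {"type": "semantic_text", "inference_id": ELSER_EIS_ID}}}}


def sparse_embedding_request(texts: list[str]) -> tuple[str, str, dict[str, Any]]:
    # Direct call to the endpoint: POST _inference/sparse_embedding/.elser-2-elastic
    return "POST", f"/_inference/sparse_embedding/{ELSER_EIS_ID}", {"input": texts}


planned_requests = [
    ("PUT", f"/{INDEX}", elser_mapping("content")),
    # Index plain text; the semantic_text field runs inference for you.
    ("PUT", f"/{INDEX}/_doc/1", {"content": "EIS runs ELSER on GPUs."}),
    sparse_embedding_request(["What is ELSER?"]),
]
for method, path, body in planned_requests:
    print(method, path)
    print(json.dumps(body, indent=2))
```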

##### Get started with semantic search with ELSER on EIS

The [Semantic search with `semantic_text`](https://docs-v3-preview.elastic.dev/elastic/docs-content/pull/6239/solutions/search/semantic-search/semantic-search-semantic-text) tutorial explains in detail how to use the `semantic_text` field with the ELSER endpoint on EIS instead of the default endpoint. It's a good way to get started with the new endpoint.