Tutorial 1: Install a self-managed Elastic Stack

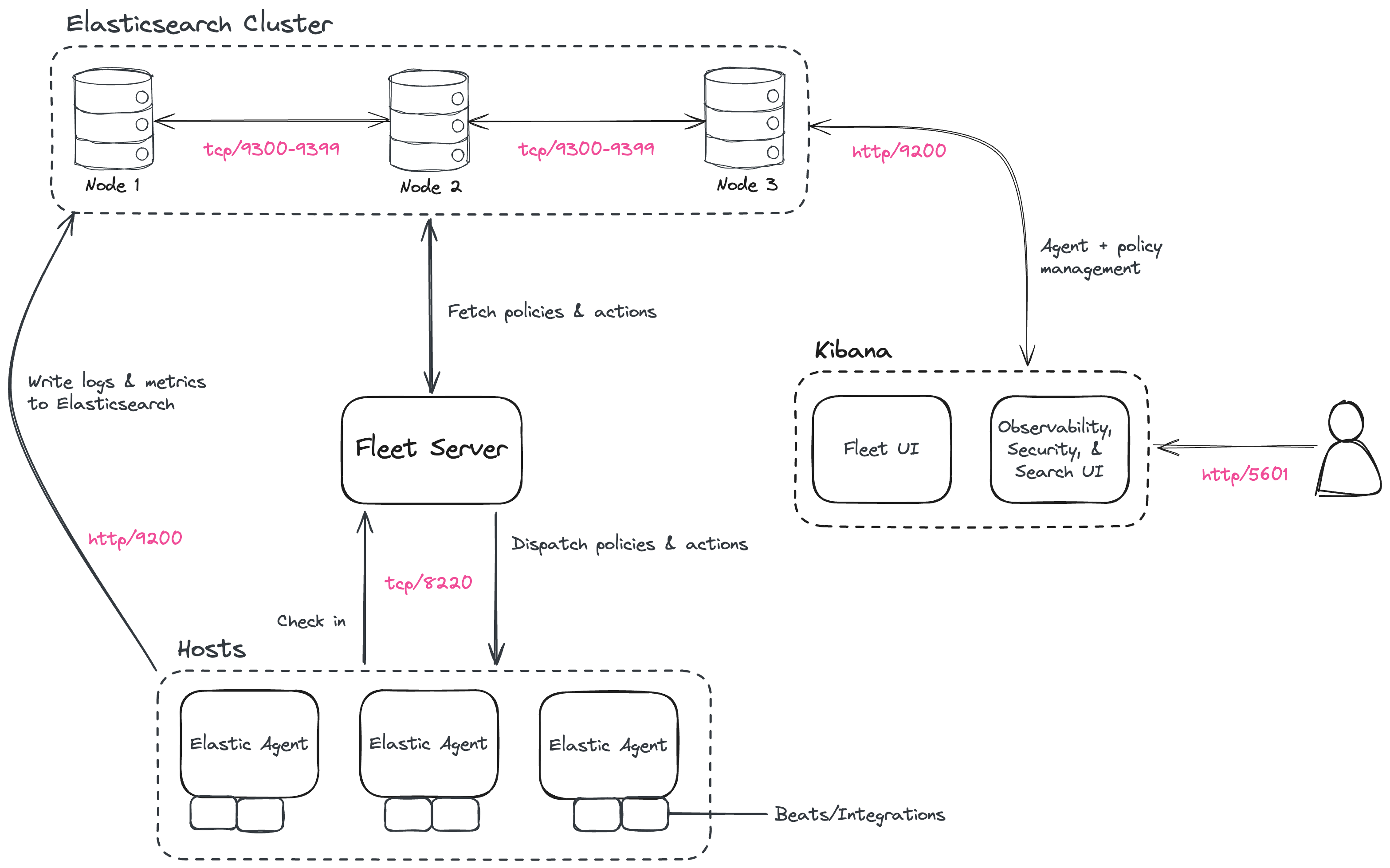

This tutorial demonstrates how to install and configure version 9.3.2 of the Elastic Stack in a self-managed environment. Following these steps sets up a three-node Elasticsearch cluster, with Kibana, Fleet Server, and Elastic Agent, each on a separate host. The Elastic Agent is configured with the System integration, enabling it to gather local system logs and metrics and deliver them into the Elasticsearch cluster. Finally, the tutorial shows how to view the system data in Kibana.

This installation flow relies on the Elasticsearch automatic security setup, which secures Elasticsearch by default during the initial installation.

If you plan to use custom certificates (for example, corporate-provided or publicly trusted certificates), or if you need to configure HTTPS for browser-to-Kibana communication, you can follow this tutorial in combination with Tutorial 2: Customize certificates for a self-managed Elastic Stack at the appropriate stage of the installation.

For more details, refer to Security overview.

It should take between one and two hours to complete these steps.

- Prerequisites and assumptions

- Elastic Stack overview

- Security overview

- Step 1: Set up the first Elasticsearch node

- Step 2: Configure the first Elasticsearch node for connectivity

- Step 3: Start Elasticsearch

- Step 4: Set up a second Elasticsearch node

- Step 5: Set up additional Elasticsearch nodes

- Step 6: Consolidate Elasticsearch configuration

- Step 7: Install Kibana

- Step 8: Install Fleet Server

- Step 9: Install Elastic Agent

- Step 10: View your system data

- Next steps

To get started, you need the following:

- A set of virtual or physical hosts on which to install each stack component.

- On each host, a user account with `sudo` privileges and `curl` installed.

The examples in this guide use RPM Package Manager (RPM) packages to install the Elastic Stack 9.3.2 components on hosts running Red Hat Enterprise Linux or a compatible distribution such as Rocky Linux.

For the full list of supported operating systems and platforms, refer to the Elastic Support Matrix.
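If you want to confirm the command-line prerequisites on a host before you start, a small check like the following can help. It is a minimal sketch: `check_prereqs` is a hypothetical helper, and the command list in the commented example (`sudo`, `curl`, `rpm`, `sha512sum`) is an assumption based on the commands this tutorial runs later. It does not verify that your account actually holds `sudo` privileges.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of any Elastic tooling): report commands missing from PATH.
# Returns 0 when every named command is available, 1 otherwise.
check_prereqs() {
  local missing=0 cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Assumed command list, based on the commands used in this tutorial:
# check_prereqs sudo curl rpm sha512sum && echo "prerequisites OK"
```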

The packages needed by this tutorial are:

- https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-9.3.2-x86_64.rpm

- https://artifacts.elastic.co/downloads/kibana/kibana-9.3.2-x86_64.rpm

- https://artifacts.elastic.co/downloads/beats/elastic-agent/elastic-agent-9.3.2-linux-x86_64.tar.gz

For Elastic Agent and Fleet Server (both of which use the elastic-agent-9.3.2-linux-x86_64.tar.gz package) we recommend using TAR/ZIP packages over RPM/DEB system packages, since only the former support upgrading using Fleet.
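Every package URL above follows the same pattern, with the version embedded in the path and file name, so a small helper can print them for a given version. This is a sketch only: `artifact_urls` is a hypothetical function, and it assumes that other versions use the same URL layout as the 9.3.2 links listed above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the three package URLs used in this tutorial for one version.
# Assumes every version follows the same layout as the 9.3.2 links listed above.
artifact_urls() {
  local version="$1"
  local base="https://artifacts.elastic.co/downloads"
  echo "${base}/elasticsearch/elasticsearch-${version}-x86_64.rpm"
  echo "${base}/kibana/kibana-${version}-x86_64.rpm"
  echo "${base}/beats/elastic-agent/elastic-agent-${version}-linux-x86_64.tar.gz"
}
```

For example, `artifact_urls 9.3.2 | grep '\.rpm$'` lists only the two RPM packages that you download manually. The Elastic Agent archive is downloaded by the commands that Fleet generates in Steps 8 and 9.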

Special considerations such as firewalls and proxy servers are not covered here.

For the basic ports and protocols required for the installation to work, refer to the following overview section.

Before starting, take a moment to familiarize yourself with the Elastic Stack components.

To learn more about the Elastic Stack and how these components relate to each other, refer to An overview of the Elastic Stack.

This tutorial results in a secure-by-default environment, but not every connection uses the same certificate model. Before you begin, it helps to understand the security layout produced by these steps:

- Elasticsearch uses the automatic security setup during the initial installation flow. This process generates certificates and enables TLS for both the transport and HTTP layers.

- Kibana connects to Elasticsearch using the enrollment flow from the initial Elasticsearch setup.

- HTTPS for browser-to-Kibana communication is not configured in this tutorial, although it is strongly recommended for production environments. Kibana HTTPS is covered in Tutorial 2: Customize certificates for a self-managed Elastic Stack.

- Fleet Server is installed using the Quick Start flow, which uses a self-signed certificate for its HTTPS endpoint.

- Elastic Agent enrolls through that Quick Start flow, which requires the install command to include the `--insecure` flag.

If you plan to use certificates signed by your organization's certificate authority or by a public CA, complete this tutorial until Kibana is installed (Step 7), and then continue with Tutorial 2: Customize certificates for a self-managed Elastic Stack before installing Fleet Server and Elastic Agent.

To begin, use RPM to install Elasticsearch on the first host. This initial Elasticsearch instance bootstraps a new cluster. You can find details about all of the following steps in the document Install Elasticsearch with RPM.

For installation steps for other supported methods, refer to Install Elasticsearch.

Log in to the host where you'd like to set up your first Elasticsearch node.

Create a working directory for the installation package:

`mkdir elastic-install-files`

Change into the new directory:

`cd elastic-install-files`

Download the Elasticsearch RPM and checksum file from the Elastic Artifact Registry:

`curl -L -O https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-9.3.2-x86_64.rpm`

`curl -L -O https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-9.3.2-x86_64.rpm.sha512`

(Optional) Confirm the validity of the downloaded package by checking the SHA of the downloaded RPM against the published checksum:

`sha512sum -c elasticsearch-9.3.2-x86_64.rpm.sha512`

The command should return:

`elasticsearch-9.3.2-x86_64.rpm: OK`

(Optional) Import the Elasticsearch GPG key used to verify the RPM package signature:

`sudo rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch`

Run the Elasticsearch install command:

`sudo rpm --install elasticsearch-9.3.2-x86_64.rpm`

The Elasticsearch install process enables certain security features by default, including the following:

- Authentication and authorization, including the built-in `elastic` superuser account.

- TLS certificates and keys for the transport and HTTP layers, stored in `/etc/elasticsearch/certs` and configured automatically for use by Elasticsearch.

- The transport interface is bound to the loopback interface (`localhost`), preventing other nodes from joining the cluster, while the HTTP interface listens on all network interfaces (`http.host: 0.0.0.0`).


Copy the terminal output from the install command to a local file. In particular, you need the password for the built-in `elastic` superuser account. The output also contains the commands to enable Elasticsearch to run as a service, which you use in the next step.

Run the following two commands to enable Elasticsearch to run as a service using `systemd`. This enables Elasticsearch to start automatically when the host system reboots. For more details, refer to Running Elasticsearch with `systemd`.

`sudo systemctl daemon-reload`

`sudo systemctl enable elasticsearch.service`
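The optional checksum check in this step works because each `.sha512` file names the package it describes, which is also why `sha512sum -c` has to run in the directory that contains both files. The following minimal sketch wraps that check in a hypothetical `verify_sha512` function; it assumes GNU coreutils `sha512sum` and a checksum file stored next to the package with a `.sha512` suffix.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify a downloaded package against the .sha512 file next to it.
# The checksum file lists the bare file name, so the check runs in the package's directory.
verify_sha512() {
  local file="$1"
  (
    cd "$(dirname "$file")" || exit 1
    sha512sum -c "$(basename "$file").sha512"
  )
}
```

For example, `verify_sha512 elasticsearch-9.3.2-x86_64.rpm` prints `elasticsearch-9.3.2-x86_64.rpm: OK` for an intact download and returns a non-zero status if the file was modified or truncated. After importing the GPG key, `rpm --checksig elasticsearch-9.3.2-x86_64.rpm` checks the package signature as well.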

Before moving ahead to configure additional Elasticsearch nodes, you need to update the Elasticsearch configuration on this first node so that other hosts are able to connect to it. This is done by updating the settings in the elasticsearch.yml file. For more details about Elasticsearch configuration and the most common settings, refer to Configure Elasticsearch and important settings configuration.

Obtain your host IP address (for example, by running `ifconfig`). You need this value later.

Open the Elasticsearch configuration file in a text editor, such as `vim`:

`sudo vim /etc/elasticsearch/elasticsearch.yml`

In a multi-node Elasticsearch cluster, all of the Elasticsearch instances must have the same cluster name.

In the configuration file, uncomment the line `#cluster.name: my-application` and give the Elasticsearch cluster any name that you'd like:

`cluster.name: elasticsearch-demo`

(Optional) Set a node name for this instance. If you don't set one, Elasticsearch uses its host name by default.

In the configuration file, uncomment the line `#node.name: node-1` and give the Elasticsearch instance any name that you'd like:

`node.name: instance-1`

Configure networking settings.

Uncomment the line `#transport.host: 0.0.0.0` to accept connections on all available network interfaces. By default, Elasticsearch listens for transport traffic on `localhost`, which prevents other Elasticsearch instances from joining the cluster. To allow communication between nodes, you need to bind the transport interface to a non-loopback address.

`transport.host: 0.0.0.0`

- If you want Elasticsearch to listen only on a specific interface, set this to the host IP address instead.

Make sure `http.host` is configured. Elasticsearch should already be configured to listen on all network interfaces for HTTP traffic as part of the automatic setup.

Verify that this setting is present in your configuration file. If it is not, add it:

`http.host: 0.0.0.0`

- If you want Elasticsearch to listen only on a specific interface, set this to the host IP address instead.

Tip: As an alternative to setting `transport.host` and `http.host` separately, you can use `network.host` to configure both interfaces at once. For details, refer to the Elasticsearch networking settings documentation.

Save your changes and close the editor.

Important: After you configure Elasticsearch to use non-loopback addresses, it enforces bootstrap checks. If Elasticsearch does not start successfully in the next step, review the Important system configuration documentation.
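If you prefer to script the edits from this step instead of using an editor, the following sketch applies them with `sed`. It is a minimal illustration under stated assumptions: `configure_first_node` is a hypothetical helper, it expects the commented default lines described in this step (`#cluster.name: ...`, `#node.name: ...`, `#transport.host: ...`), and it relies on GNU `sed -i`. Back up `elasticsearch.yml` before running anything like it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: apply the Step 2 edits to an elasticsearch.yml file.
# Assumes GNU sed and the commented default lines described in Step 2.
configure_first_node() {
  local yml="$1" cluster="$2" node="$3"
  # Uncomment and set the cluster name, node name, and transport binding.
  sed -i \
    -e "s|^#cluster\.name:.*|cluster.name: ${cluster}|" \
    -e "s|^#node\.name:.*|node.name: ${node}|" \
    -e "s|^#transport\.host:.*|transport.host: 0.0.0.0|" \
    "$yml"
  # Leave http.host alone if the automatic security setup already wrote it.
  grep -q '^http\.host:' "$yml" && return 0
  # Make sure the appended setting starts on its own line.
  [ -n "$(tail -c 1 "$yml")" ] && echo >> "$yml"
  echo 'http.host: 0.0.0.0' >> "$yml"
}
```

Because `/etc/elasticsearch/elasticsearch.yml` is owned by root, define and call the function from a root shell, for example `configure_first_node /etc/elasticsearch/elasticsearch.yml elasticsearch-demo instance-1`. Running it a second time makes no further changes, because the commented lines are already gone and `http.host` is already present.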

Now, it's time to start the Elasticsearch service on the first node:

`sudo systemctl start elasticsearch.service`

If you need to, you can stop the service by running `sudo systemctl stop elasticsearch.service`.

Tip: If Elasticsearch does not start successfully, check the Elasticsearch log file at `/var/log/elasticsearch/<cluster-name>.log` to learn more. For example, if your cluster name is `elasticsearch-demo`, the log file is `/var/log/elasticsearch/elasticsearch-demo.log`.

Make sure that Elasticsearch is running properly:

`sudo curl --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic:$ELASTIC_PASSWORD https://localhost:9200`

In the command, replace `$ELASTIC_PASSWORD` with the `elastic` superuser password that you copied from the install command output. If all is well, the command returns a response like this:

`{ "name" : "Cp9oae6", "cluster_name" : "elasticsearch-demo", "cluster_uuid" : "AT69_C_DTp-1qgIJlatQqA", "version" : { "number" : "{version_qualified}", "build_type" : "{build_type}", "build_hash" : "f27399d", "build_flavor" : "default", "build_date" : "2016-03-30T09:51:41.449Z", "build_snapshot" : false, "lucene_version" : "{lucene_version}", "minimum_wire_compatibility_version" : "1.2.3", "minimum_index_compatibility_version" : "1.2.3" }, "tagline" : "You Know, for Search" }`

Finally, check the status of Elasticsearch:

`sudo systemctl status elasticsearch`

As with the previous `curl` command, the output should confirm that Elasticsearch started successfully. Type `q` to exit from the `status` command results.
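Because the log file name follows the cluster name, you can derive the path from `elasticsearch.yml` rather than typing it. This is a sketch with assumptions: `es_log_path` is a hypothetical helper, it reads the configuration from standard input, assumes the RPM default log directory `/var/log/elasticsearch`, and falls back to `elasticsearch`, the default cluster name, when `cluster.name` is not set.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read elasticsearch.yml on stdin and print the matching log path.
# Assumes the RPM log directory and the default cluster name "elasticsearch".
es_log_path() {
  local name
  # Take the value of the last uncommented cluster.name line, ignoring trailing comments.
  name=$(sed -n 's/^cluster\.name:[[:space:]]*\([^[:space:]#]*\).*/\1/p' | tail -n 1)
  echo "/var/log/elasticsearch/${name:-elasticsearch}.log"
}
```

For example: `sudo tail -f "$(sudo cat /etc/elasticsearch/elasticsearch.yml | es_log_path)"`. Reading the file through `sudo cat` avoids permission errors, because the Elasticsearch configuration directory is typically not readable by regular users.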

To set up a second Elasticsearch node, you start by installing the Elasticsearch RPM package, but then follow a different configuration flow so that the node joins the existing cluster instead of creating a new one. You can find additional details in Reconfigure a node to join an existing cluster.

Log in to the host where you'd like to set up your second Elasticsearch instance.

Create a working directory for the installation package:

`mkdir elastic-install-files`

Change into the new directory:

`cd elastic-install-files`

Download the Elasticsearch RPM and checksum file:

`curl -L -O https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-9.3.2-x86_64.rpm`

`curl -L -O https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-9.3.2-x86_64.rpm.sha512`

Check the SHA of the downloaded RPM:

`sha512sum -c elasticsearch-9.3.2-x86_64.rpm.sha512`

(Optional) Import the Elasticsearch GPG key used to verify the RPM package signature:

`sudo rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch`

Run the Elasticsearch install command:

`sudo rpm --install elasticsearch-9.3.2-x86_64.rpm`

Unlike the setup for the first Elasticsearch node, in this case you don't need to copy the output of the install command. By default, the installation prepares the node as a single-node cluster, but in a later step the `elasticsearch-reconfigure-node` tool updates that configuration so the node can join your existing cluster.

Enable Elasticsearch to run as a service:

`sudo systemctl daemon-reload`

`sudo systemctl enable elasticsearch.service`

Important: Don't start the Elasticsearch service yet. Complete the remaining configuration steps first.

To enable the new Elasticsearch node to connect to the cluster, create an enrollment token from any node that is already part of the cluster.

Return to your terminal shell on the first Elasticsearch node and generate a node enrollment token:

`sudo /usr/share/elasticsearch/bin/elasticsearch-create-enrollment-token -s node`

Copy the generated enrollment token from the command output.

Tip: An enrollment token has a lifespan of 30 minutes. If the `elasticsearch-reconfigure-node` command returns an `Invalid enrollment token` error, try generating a new token. Be sure not to confuse an Elasticsearch enrollment token (for enrolling Elasticsearch nodes in an existing cluster) with a Kibana enrollment token (to enroll your Kibana instance with Elasticsearch, as described in Step 7: Install Kibana). These two tokens are not interchangeable.

In the terminal shell for your second Elasticsearch node, pass the enrollment token as a parameter to the `elasticsearch-reconfigure-node` tool:

`sudo /usr/share/elasticsearch/bin/elasticsearch-reconfigure-node --enrollment-token <enrollment-token>`

- Replace `<enrollment-token>` with the token that you copied in the previous step.

Note: If `elasticsearch-reconfigure-node` fails and indicates that the node has already been started or initialized, refer to Cases when security auto-configuration is skipped for a list of possible causes. This can happen, for example, if Elasticsearch was started previously, which creates the `data` directory and prevents the auto-configuration process from running again.

Answer the `Do you want to continue with the reconfiguration process` prompt with `yes` (`y`). The new Elasticsearch node is reconfigured.

Obtain your host IP address (for example, by running `ifconfig`). You need this value later.

Open the new Elasticsearch instance configuration file in a text editor:

`sudo vim /etc/elasticsearch/elasticsearch.yml`

Because you ran the `elasticsearch-reconfigure-node` tool, certain settings have already been updated. For example:

- The `transport.host: 0.0.0.0` and `http.host: 0.0.0.0` settings are already uncommented.

- The `discovery.seed_hosts` setting has the host IP address of the first Elasticsearch node. As you add each new Elasticsearch node to the cluster, the `discovery.seed_hosts` setting contains an array of the IP addresses and port numbers to connect to each Elasticsearch node that was previously added to the cluster.


In the configuration file, uncomment the line `#cluster.name: my-application` and set it to match the name you specified on the first Elasticsearch node:

`cluster.name: elasticsearch-demo`

(Optional) Set a node name for this instance. If you don't set one, Elasticsearch uses its host name by default.

In the configuration file, uncomment the line `#node.name: node-1` and give the Elasticsearch instance any name that you'd like:

`node.name: instance-2`

(Optional) Review networking settings.

After running `elasticsearch-reconfigure-node`, Elasticsearch is already configured to use non-loopback addresses for transport and HTTP traffic, so no changes are usually required. You can verify this in your configuration file:

`transport.host: 0.0.0.0`

`http.host: 0.0.0.0`

Note: If you make changes to the networking settings, ensure that the networking configuration is consistent across all nodes. For example, use the same approach to binding (specific IP addresses or `0.0.0.0`) and the same settings (`transport.host`, `http.host`, or `network.host`) across all nodes. For details, refer to the Elasticsearch networking settings documentation.

Save your changes and close the editor.

Start Elasticsearch on the second node:

`sudo systemctl start elasticsearch.service`

Tip: If Elasticsearch does not start successfully, check the Elasticsearch log file at `/var/log/elasticsearch/<cluster-name>.log` to learn more. For example, if your cluster name is `elasticsearch-demo`, the log file is `/var/log/elasticsearch/elasticsearch-demo.log`.

(Optional) To monitor the second Elasticsearch node as it starts up and joins the cluster, open a new terminal session on the second node and `tail` the Elasticsearch log file:

`sudo tail -f /var/log/elasticsearch/elasticsearch-demo.log`

- If needed, replace `elasticsearch-demo` with your cluster name.

Notice some helpful diagnostics in the log file, such as:

- `Security is enabled`

- `Profiling is enabled`

- `using discovery type [multi-node]`

- `initialized`

- `starting...`

After a minute or so, the log should show a message like:

`[<hostname2>] master node changed {previous [], current [<hostname1>...]}`

where `hostname1` is your first Elasticsearch instance node, and `hostname2` is your second Elasticsearch instance node. The message indicates that the second Elasticsearch node has successfully contacted the initial Elasticsearch node and joined the cluster.


As a final check, verify that the new node is reachable and responding, and that it appears in the cluster. In the following commands, replace `$ELASTIC_PASSWORD` with the same `elastic` superuser password that you used on the first Elasticsearch node.

To confirm that Elasticsearch is running properly on the new node, run:

`sudo curl --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic:$ELASTIC_PASSWORD https://localhost:9200`

- For a more complete check, replace `localhost` with the IP address of the new node to verify that it is reachable over the network.

Response example:

`{ "name" : "Cp9oae6", "cluster_name" : "elasticsearch-demo", "cluster_uuid" : "AT69_C_DTp-1qgIJlatQqA", "version" : { "number" : "{version_qualified}", "build_type" : "{build_type}", "build_hash" : "f27399d", "build_flavor" : "default", "build_date" : "2016-03-30T09:51:41.449Z", "build_snapshot" : false, "lucene_version" : "{lucene_version}", "minimum_wire_compatibility_version" : "1.2.3", "minimum_index_compatibility_version" : "1.2.3" }, "tagline" : "You Know, for Search" }`

To confirm that the node has joined the cluster, run the following command on any Elasticsearch node:

`sudo curl --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic:$ELASTIC_PASSWORD "https://localhost:9200/_cat/nodes?v"`

- You can replace `localhost` with the IP address of any of the nodes.

The output should include the new node together with the existing node or nodes in the cluster, for example:

`203.0.113.25 46 97 18 0.21 0.23 0.10 cdfhilmrstw - instance-2`

`203.0.113.21 31 96 1 0.04 0.03 0.01 cdfhilmrstw * instance-1`

To set up additional Elasticsearch nodes, repeat the process from Step 4: Set up a second Elasticsearch node for each new node that you add to the cluster.

As a recommended best practice, create a new enrollment token for each new node that you add.
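After you add each node, the `_cat/nodes` check from Step 4 is a convenient way to confirm the node count and the elected master. The following sketch condenses that output into one line. `summarize_nodes` is a hypothetical helper, and it assumes the default `_cat/nodes?v` columns: a header row first, the elected-master marker (`*`) in the second-to-last column, and the node name in the last column.

```shell
#!/usr/bin/env bash
# Hypothetical helper: condense `_cat/nodes?v` output (read on stdin) into one line.
# Assumes the default columns: a header row first, the elected-master marker "*"
# in the second-to-last column, and the node name in the last column.
summarize_nodes() {
  awk 'NR > 1 && NF > 0 {
         count++
         if ($(NF - 1) == "*") master = $NF
       }
       END { printf "nodes=%d master=%s\n", count, master }'
}
```

For example, on any node: `sudo curl -s --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic:$ELASTIC_PASSWORD "https://localhost:9200/_cat/nodes?v" | summarize_nodes`. With the three-node cluster from this tutorial, the line should start with `nodes=3`.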

Once you have added all your Elasticsearch nodes to the cluster, you need to consolidate the elasticsearch.yml configuration on all nodes so that they can restart and rejoin the cluster cleanly in the future.

On each Elasticsearch node, open `/etc/elasticsearch/elasticsearch.yml` in a text editor.

Comment out or remove the `cluster.initial_master_nodes` setting, if it is still present. This setting is only needed while bootstrapping a new cluster.

Update `discovery.seed_hosts` so it includes the IP address and transport port of each master-eligible Elasticsearch node in the cluster. On the first node in the cluster, you need to add the `discovery.seed_hosts` setting manually. For example, if your cluster has three nodes:

`discovery.seed_hosts: ["203.0.113.84:9300", "203.0.113.132:9300", "203.0.113.156:9300"]`

Note: If you have not configured node roles, every node is master-eligible, so all of your Elasticsearch nodes should appear in the `discovery.seed_hosts` list on every node.

Save your changes on each node.

Optionally, restart the Elasticsearch service on each node to validate the updated configuration.

If you do not perform these steps, one or more nodes can fail the discovery configuration bootstrap check when restarted.

For more information, refer to Update the config files and Discovery and cluster formation.
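The two configuration edits in this step can also be scripted. The following sketch uses two hypothetical helpers: `seed_hosts_yaml` prints a block-style `discovery.seed_hosts` list (equivalent to the example list above) for the IP addresses you pass in, and `comment_initial_master_nodes` comments out `cluster.initial_master_nodes`. They assume the default transport port `9300`, a single-line `cluster.initial_master_nodes` entry, and GNU `sed`.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for Step 6. Assume GNU sed and the default transport port 9300.

# Print a block-style discovery.seed_hosts list for the given node IP addresses.
seed_hosts_yaml() {
  local ip
  echo "discovery.seed_hosts:"
  for ip in "$@"; do
    echo "  - ${ip}:9300"
  done
}

# Comment out a single-line cluster.initial_master_nodes setting, if one is present.
comment_initial_master_nodes() {
  sed -i 's/^cluster\.initial_master_nodes:/#&/' "$1"
}
```

Paste the output of `seed_hosts_yaml 203.0.113.84 203.0.113.132 203.0.113.156` into `elasticsearch.yml` in place of any existing `discovery.seed_hosts` entry, rather than alongside it, so that the setting is defined only once.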

As with Elasticsearch, you can use RPM to install Kibana on another host. You can find details about all of the following steps in the document Install Kibana with RPM.

For installation steps using other supported methods, refer to Install Kibana.

Log in to the host where you'd like to install Kibana and create a working directory for the installation package:

`mkdir kibana-install-files`

Change into the new directory:

`cd kibana-install-files`

Download the Kibana RPM and checksum file from the Elastic website:

`curl -L -O https://artifacts.elastic.co/downloads/kibana/kibana-9.3.2-x86_64.rpm`

`curl -L -O https://artifacts.elastic.co/downloads/kibana/kibana-9.3.2-x86_64.rpm.sha512`

Confirm the validity of the downloaded package by checking the SHA of the downloaded RPM against the published checksum:

`sha512sum -c kibana-9.3.2-x86_64.rpm.sha512`

The command should return:

`kibana-9.3.2-x86_64.rpm: OK`

(Optional) Import the Elasticsearch GPG key used to verify the RPM package signature:

`sudo rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch`

- The GPG key used to sign Kibana and Elasticsearch RPM packages is the same.

Run the Kibana install command:

`sudo rpm --install kibana-9.3.2-x86_64.rpm`

Run the following two commands to enable Kibana to run as a service using `systemd`, enabling Kibana to start automatically when the host system reboots:

`sudo systemctl daemon-reload`

`sudo systemctl enable kibana.service`

Before starting the Kibana service, update kibana.yml with the following settings:

- The network binding address so that Kibana listens on its host IP address.

- A saved objects encryption key required for features such as Fleet.

For more details about Kibana configuration, refer to the Kibana configuration.

Obtain the host IP address for your Kibana host (for example, by running `ifconfig`) and make note of it.

Generate a saved objects encryption key on the Kibana host:

`sudo /usr/share/kibana/bin/kibana-encryption-keys generate`

The command output includes several encryption-related settings. For this tutorial, copy only the value of `xpack.encryptedSavedObjects.encryptionKey`, which is required for Fleet features. You can ignore the other generated keys for now.

Open the Kibana configuration file for editing:

`sudo vim /etc/kibana/kibana.yml`

Uncomment the line `#server.host: localhost` and replace the default address with the host IP address that you copied. For example:

`server.host: 203.0.113.28`

- If you want Kibana to listen on all available network interfaces, you can use `0.0.0.0` instead.


Add the `xpack.encryptedSavedObjects.encryptionKey` setting with the value returned by the `kibana-encryption-keys generate` command:

`xpack.encryptedSavedObjects.encryptionKey: "min-32-byte-long-strong-encryption-key"`

- Replace the value with the actual key.

Important: In production environments, consider storing this setting in the Kibana keystore instead of `kibana.yml`. For guidance, refer to Kibana secure settings. Rotate encryption keys only as part of a planned process, so that existing encrypted saved objects remain readable. For guidance on rotation, refer to Encryption key rotation.

Save your changes and close the editor.

Kibana is now ready to start and enroll with the Elasticsearch cluster.
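The `kibana.yml` changes can be scripted in the same way as the Elasticsearch ones. This sketch uses a hypothetical `configure_kibana_yml` helper. It assumes GNU `sed`, the default commented `#server.host` line, and an encryption key copied from `kibana-encryption-keys generate`; it rejects keys shorter than 32 characters, the minimum length Kibana accepts for `xpack.encryptedSavedObjects.encryptionKey`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: apply the kibana.yml edits from this step.
# Assumes GNU sed and the default commented "#server.host:" line.
configure_kibana_yml() {
  local yml="$1" host="$2" key="$3"
  # Kibana requires the saved objects encryption key to be at least 32 characters.
  if [ "${#key}" -lt 32 ]; then
    echo "encryption key must be at least 32 characters" >&2
    return 1
  fi
  sed -i "s|^#server\.host:.*|server.host: ${host}|" "$yml"
  # Append the key only once, on its own line.
  if ! grep -q '^xpack\.encryptedSavedObjects\.encryptionKey:' "$yml"; then
    [ -n "$(tail -c 1 "$yml")" ] && echo >> "$yml"
    echo "xpack.encryptedSavedObjects.encryptionKey: \"${key}\"" >> "$yml"
  fi
}
```

From a root shell, run `configure_kibana_yml /etc/kibana/kibana.yml 203.0.113.28 "<key>"`, replacing `<key>` with the generated value. Running it again does not add a second key.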

In this section, you start Kibana for the first time and complete enrollment with your Elasticsearch cluster. This initial startup provides the verification code and enrollment prompt, and it finalizes Kibana setup by automatically applying the required connection settings.

Start the Kibana service:

`sudo systemctl start kibana.service`

If you need to, you can stop the service by running `sudo systemctl stop kibana.service`.

Run the `status` command to get details about the Kibana service:

`sudo systemctl status kibana`

In the `status` command output, a URL is shown with:

- a host address to access Kibana

- a six-digit verification code

For example:

`Kibana has not been configured. Go to http://203.0.113.28:5601/?code=<code> to get started.`

- If the URL shows `0.0.0.0`, use the host IP address when connecting to Kibana in the next step.

Make note of the verification code.

Open a web browser to the external IP address of the Kibana host machine, for example: `http://<kibana-host-address>:5601`. It can take a minute or two for Kibana to start up, so refresh the page if you don't see a prompt right away.

When Kibana starts, you're prompted for an enrollment token. You must generate this token in Elasticsearch:

Return to the terminal session on the first Elasticsearch node.

Run the `elasticsearch-create-enrollment-token` command with the `-s kibana` option to generate a Kibana enrollment token:

`sudo /usr/share/elasticsearch/bin/elasticsearch-create-enrollment-token -s kibana`

Copy the generated enrollment token from the command output and paste it into the enrollment prompt in the browser.

Click Configure Elastic.

If you're prompted to provide a verification code, copy and paste in the six-digit code that was returned by the `status` command. Then, wait for the setup to complete.

When the Welcome to Elastic page appears, sign in with the `elastic` superuser account and the password that was generated when you installed the first Elasticsearch node.

Click Log in.

Kibana is now fully set up and communicating with your Elasticsearch cluster.
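Earlier in this step, you read the six-digit verification code from the `systemctl status` output. If that long line is truncated in your terminal, or you need to repeat the enrollment, you can extract the code with a small filter instead. `kibana_verification_code` is a hypothetical helper that assumes the `Go to http://<host>:5601/?code=<code> to get started.` message format shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the most recent six-digit Kibana verification code found on stdin.
# Assumes the "Go to http://<host>:5601/?code=<code> to get started." message format.
kibana_verification_code() {
  sed -n 's/.*[?]code=\([0-9]\{6\}\).*/\1/p' | tail -n 1
}
```

For example, `sudo systemctl status kibana --no-pager -l | kibana_verification_code`, or `sudo journalctl -u kibana --no-pager | kibana_verification_code` to search the full service log. Because the function keeps only the last match, it returns the most recent code if Kibana was restarted.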

This tutorial already uses the Elasticsearch automatic security setup, which configures security for Elasticsearch by default, including TLS for both the transport and HTTP layers.

If you plan to use certificates signed by your organization's certificate authority or by a public CA instead, stop here after installing Kibana and continue with Tutorial 2: Customize certificates for a self-managed Elastic Stack. That tutorial is the right place to replace or adjust the default certificate configuration before installing Fleet Server and Elastic Agent.

Following that path avoids installing Fleet Server and Elastic Agent with the certificate setup from this tutorial and then needing to reinstall the components after changing the security configuration.

Note also that the automatic setup used here does not configure HTTPS for browser access to Kibana, which is highly recommended for production environments.

Now that Kibana is up and running, you can install Fleet Server. Fleet Server connects Elastic Agent instances to Fleet and serves as a control plane for updating agent policies and collecting agent status information.

This tutorial uses the Quick Start installation flow, which generates a self-signed certificate for the Fleet Server by default. For more details about Quick Start and Advanced setup options, refer to Deploy on-premises and self-managed Fleet Server.

If you want to use custom SSL/TLS certificates, follow Tutorial 2: Customize certificates for a self-managed Elastic Stack instead of continuing with these steps.

Before proceeding, confirm the following prerequisites:

- If you're not using the built-in `elastic` superuser, ensure your Kibana user has All privileges for Fleet and Integrations.

- Elastic Agent hosts have direct network connectivity to both the Fleet Server and the Elasticsearch cluster.

- The Kibana host can connect to `https://epr.elastic.co` on port `443` to download integration packages.
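To check these network prerequisites from the command line, you can test whether the relevant TCP ports accept connections. This is a minimal sketch: `port_open` is a hypothetical helper that relies on bash's `/dev/tcp` redirection and the coreutils `timeout` command. It only confirms that a TCP connection can be opened, not that TLS, certificates, or credentials are correct.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if a TCP connection to host:port opens within 3 seconds.
# Relies on bash's /dev/tcp redirection and GNU coreutils `timeout`; it does not
# check TLS, certificates, or credentials.
port_open() {
  local host="$1" port="$2"
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$host" "$port" 2>/dev/null
}
```

From an Elastic Agent host, for example, `port_open 203.0.113.203 8220` checks Fleet Server (once it is installed) and `port_open 203.0.113.21 9200` checks an Elasticsearch node; from the Kibana host, `port_open epr.elastic.co 443` checks access to the package registry. The addresses are the example values used in this tutorial, so substitute your own.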

Log in to the host where you'd like to set up Fleet Server.

Create a working directory for the installation package:

`mkdir fleet-install-files`

Change into the new directory:

`cd fleet-install-files`

Obtain the host IP address for your Fleet Server host (for example, by running `ifconfig`). You need this value later.

Back in your web browser, open the Kibana menu and go to Management -> Fleet. Fleet opens with a message that you need to add a Fleet Server.

Click Add Fleet Server. The Add a Fleet Server flyout opens.

In the flyout, select the Quick Start tab.

Specify a name for your Fleet Server host, for example `Fleet Server`.

Specify the host URL that Elastic Agents need to use to reach the Fleet Server, for example: `https://203.0.113.203:8220`. This is the Fleet Server host IP address that you copied earlier.

Be sure to include the port number. Port `8220` is the default used by Fleet Server in an on-premises environment. Refer to Default port assignments in the on-premises Fleet Server install documentation for a list of port assignments.

Click Generate Fleet Server policy. A policy is created that contains all of the configuration settings for the Fleet Server instance.

On the Install Fleet Server to a centralized host step, for this example we select the Linux tab. Be sure to select the tab that matches both your operating system and architecture (for example, `aarch64` or `x64`). TAR/ZIP packages are recommended over RPM/DEB system packages, since only the former support upgrading Fleet Server using Fleet.

Copy the generated commands and then run them one-by-one in the terminal on your Fleet Server host. These commands do the following:

- Download the Fleet Server package from the Elastic Artifact Registry

- Unpack the package archive

- Change into the directory containing the install binaries

- Install Fleet Server.

If you'd like to learn about the install command options, refer to `elastic-agent install` in the Elastic Agent command reference.

At the prompt, enter `Y` to install Elastic Agent and run it as a service. Wait for the installation to complete.

In the Kibana Add a Fleet Server flyout, wait for confirmation that Fleet Server has connected.

For now, ignore the Continue enrolling Elastic Agent option and close the flyout.

Fleet Server is now fully set up.

Next, install Elastic Agent on another host and use the System integration to monitor system logs and metrics.

You can install only one Elastic Agent per host.

Log in to the host where you'd like to set up Elastic Agent.

Create a working directory for the installation package:

`mkdir agent-install-files`

Change into the new directory:

`cd agent-install-files`

Open Kibana and go to Management -> Fleet.

On the Agents tab, you should see your new Fleet Server policy running with a healthy status.

Open the Settings tab and review the Fleet Server hosts and Outputs URLs. Ensure the URLs and IP addresses are valid for reaching Fleet Server and the Elasticsearch cluster, and that they use the HTTPS protocol.

Reopen the Agents tab and select Add agent. The Add agent flyout opens.

In the flyout, choose a policy name, for example `Demo Agent Policy`.

Leave Collect system logs and metrics enabled. This adds the System integration to the Elastic Agent policy.

Click Create policy.

For the Enroll in Fleet? step, leave Enroll in Fleet selected.

On the Install Elastic Agent on your host step, for this example we select the Linux tab. Be sure to select the tab that matches both your operating system and architecture (for example, `aarch64` or `x64`).

As with Fleet Server, note that TAR/ZIP packages are recommended over RPM/DEB system packages, since only the former support upgrading Elastic Agent using Fleet.

Copy the generated commands to a text editor. Do not run them yet, because you need to modify one of the commands in the next step.

In the `sudo ./elastic-agent install` command, make two changes:

- For the `--url` parameter, check that the port number is set to `8220` (used for on-premises Fleet Server).

- Append the `--insecure` flag at the end.

The `--insecure` flag is required in this tutorial to allow connections to a Fleet Server endpoint that uses a self-signed certificate. For related guidance, refer to Install Fleet-managed Elastic Agents.

Tip: If you want to set up secure communications using custom SSL certificates, refer to Tutorial 2: Customize certificates for a self-managed Elastic Stack.

The result should look like the following:

`sudo ./elastic-agent install --url=https://203.0.113.203:8220 --enrollment-token=VWCobFhKd0JuUnppVYQxX0VKV5E6UmU3BGk0ck9RM2HzbWEmcS4Bc1YUUM== --insecure`
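Because it is easy to miss one of these two edits, the following sketch checks a copied command before you run it. `patch_agent_install_cmd` is a hypothetical helper: it assumes the `--url=<value>` form shown in the generated command, rejects a URL whose port is not `8220`, and appends `--insecure` only if it is not already present.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check the --url port in a copied `elastic-agent install` command
# and append --insecure if it is missing. Assumes the --url=<value> form.
patch_agent_install_cmd() {
  local cmd="$1" url port
  url=$(printf '%s\n' "$cmd" | sed -n 's/.*--url=\([^ ]*\).*/\1/p')
  port=${url##*:}
  if [ "$port" != "8220" ]; then
    echo "expected an on-premises Fleet Server URL on port 8220, got: ${url:-<none>}" >&2
    return 1
  fi
  # Add --insecure only once.
  case " $cmd " in
    *" --insecure "*) ;;
    *) cmd="$cmd --insecure" ;;
  esac
  printf '%s\n' "$cmd"
}
```

For example, `patch_agent_install_cmd 'sudo ./elastic-agent install --url=https://203.0.113.203:8220 --enrollment-token=<token>'` prints the same command with `--insecure` appended, which you can then review and run.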

Run the commands one-by-one in the terminal on your Elastic Agent host. The commands do the following:

- Download the Elastic Agent package from the Elastic Artifact Registry.

- Unpack the package archive.

- Change into the directory containing the install binaries.

- Install Elastic Agent.

At the prompt, enter `Y` to install Elastic Agent and run it as a service. Wait for the installation to complete and for the following message to appear:

`Elastic Agent has been successfully installed.`

In the Kibana Add agent flyout, wait for confirmation that Elastic Agent has connected.

Close the flyout.

Your new Elastic Agent is now installed and enrolled with Fleet Server.

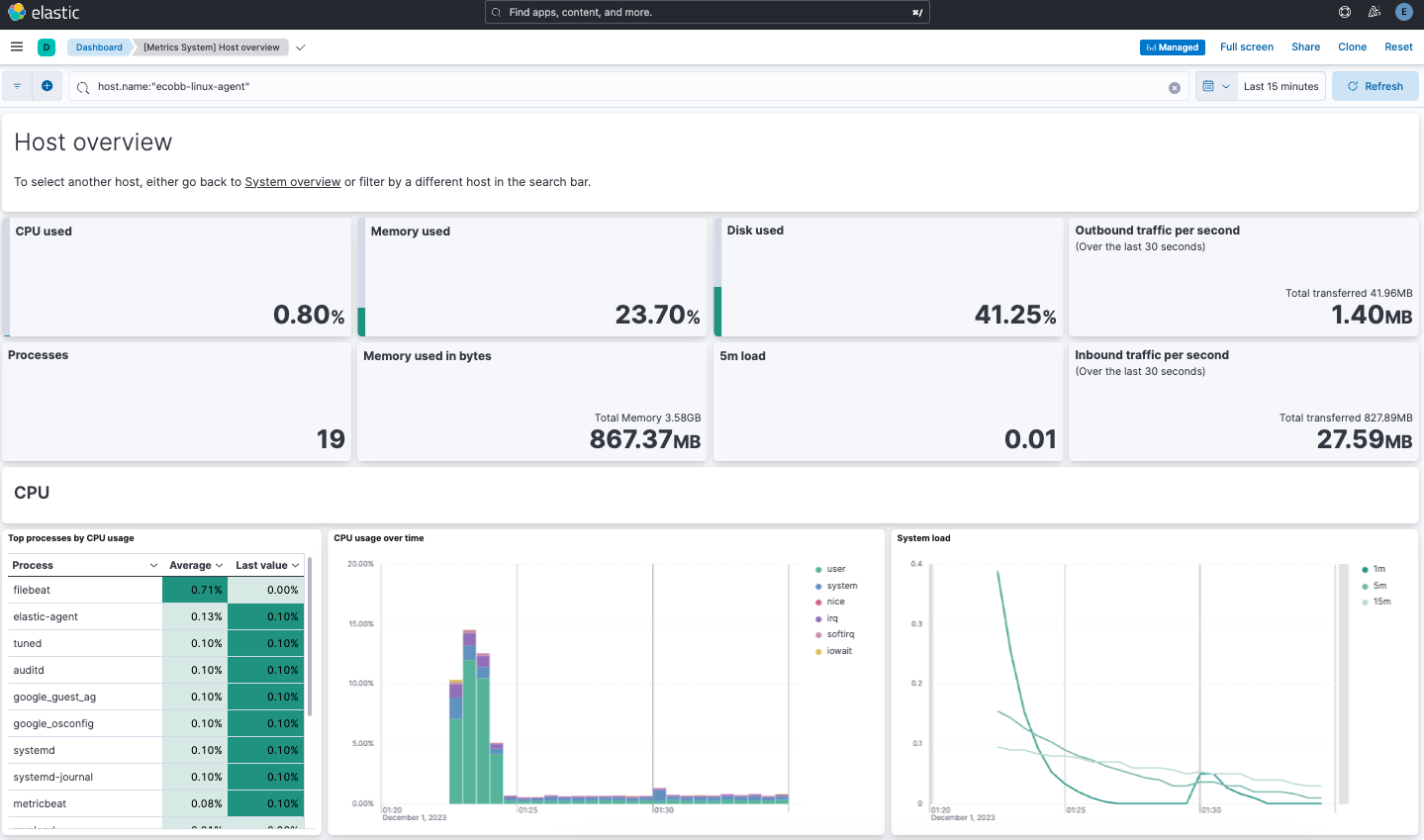

Now that all components are installed, you can view your data in multiple ways. Elastic Observability provides solution views for exploring host activity, and each integration can also provide dedicated dashboards and visualizations. In this tutorial, you'll first check the host view in Observability, then open example logs and metrics dashboards from the System integration.

The System integration assets (including dashboards) are installed automatically when you add the System integration to the Elastic Agent policy.

View your host data in Observability:

- Open the Kibana menu and go to Observability -> Infrastructure -> Hosts.

- Confirm that your host appears and is reporting data.

View your system log data:

- Open the Kibana menu and go to Analytics -> Dashboard.

- In the query field, search for `Logs System`.

- Select the [Logs System] Syslog dashboard link. The Kibana Dashboard opens with visualizations of Syslog events, hostnames and processes, and more.

View your system metrics data:

- Open the Kibana menu and return to Analytics -> Dashboard.

- In the query field, search for `Metrics System`.

- Select the [Metrics System] Host overview link. The Kibana Dashboard opens with visualizations of host metrics including CPU usage, memory usage, running processes, and others.

You've successfully set up a three-node Elasticsearch cluster, with Kibana, Fleet Server, and Elastic Agent.

Now that you've successfully configured an on-premises Elastic Stack, you can learn how to customize the certificate configuration for the Elastic Stack in a production environment using trusted CA-signed certificates. Refer to Tutorial 2: Customize certificates for a self-managed Elastic Stack to learn more.

You can also start using your newly set up Elastic Stack right away:

- Do you have data ready to ingest? Learn how to bring your data to Elastic.

- Use Elastic Observability to unify your logs, infrastructure metrics, uptime, and application performance data.

- Want to protect your endpoints from security threats? Try Elastic Security. Adding endpoint protection is just another integration that you add to the agent policy!