External inference

You can use your own API keys to integrate with third-party model providers like Amazon Bedrock, Anthropic, Azure AI Studio, Cohere, Google AI, Mistral, OpenAI, Hugging Face, and more.

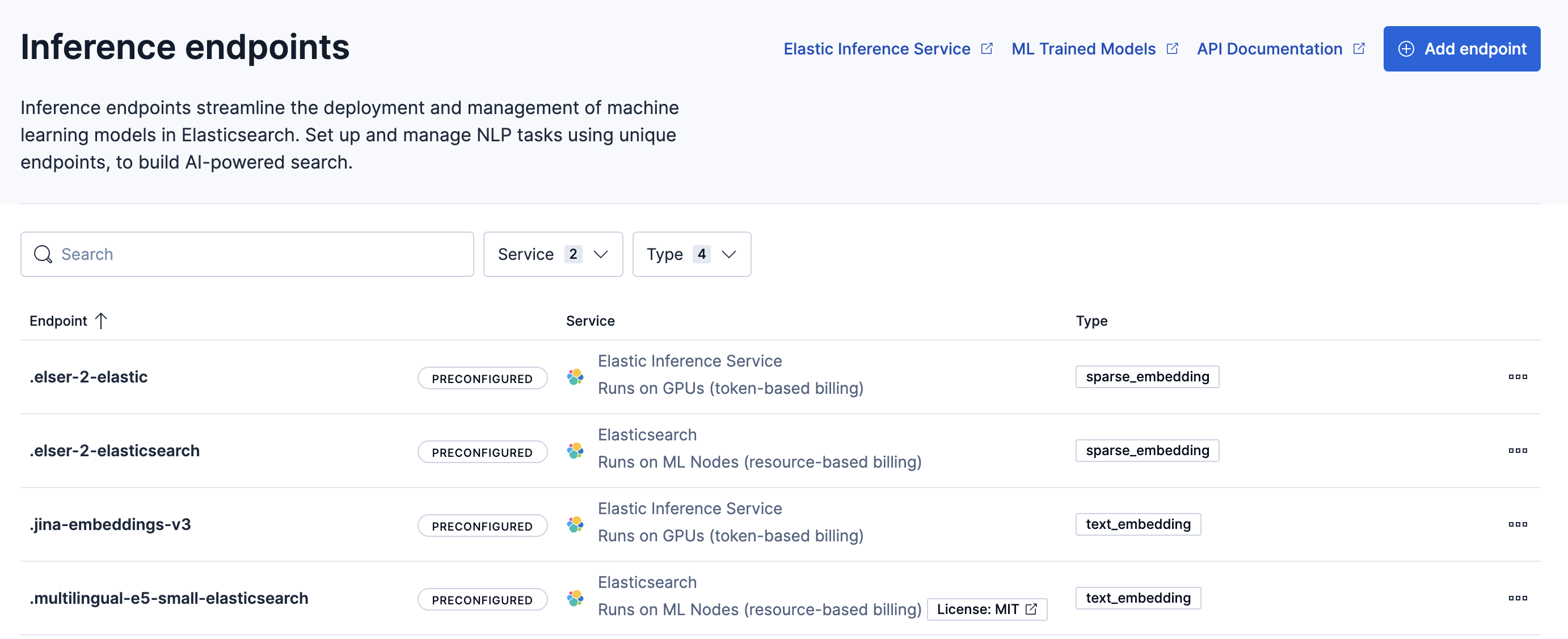

Kibana provides interfaces for managing external inference models and endpoints.

Go to the External inference page by using the navigation menu or the global search field.

To access the External inference page, you need the following Kibana privileges: Inference Endpoints: all and Advanced Settings: read.

Available actions include:

- Add endpoints

- View endpoint details

- Copy the inference endpoint ID

- Delete endpoints

Alternatively, you can manage inference endpoints by using the inference APIs.
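As a rough illustration of the API route, the following Python sketch maps the page actions listed above to Elasticsearch inference API requests: listing endpoints (`GET _inference/_all`), viewing one endpoint, running inference with a copied endpoint ID, and deleting an endpoint. It only builds the requests and prints them in Kibana Dev Tools Console syntax. The endpoint ID `my-embeddings` and the `text_embedding` task type are hypothetical placeholders, not values from this page.

```python
"""Sketch: map External inference page actions to inference API requests.

Assumption: the endpoint ID "my-embeddings" and task type "text_embedding"
used below are hypothetical placeholders.
"""
import json
from dataclasses import dataclass
from typing import Optional
from urllib.parse import quote


@dataclass
class ApiRequest:
    method: str
    path: str
    body: Optional[dict] = None

    def as_console(self) -> str:
        """Render the request in Kibana Dev Tools Console syntax."""
        text = f"{self.method} {self.path}"
        if self.body is not None:
            text += "\n" + json.dumps(self.body, indent=2)
        return text


def _endpoint_path(task_type: str, inference_id: str) -> str:
    # Endpoints are addressed as _inference/<task_type>/<inference_id>.
    return f"/_inference/{quote(task_type)}/{quote(inference_id)}"


def list_endpoints() -> ApiRequest:
    # Roughly what the External inference page lists.
    return ApiRequest("GET", "/_inference/_all")


def view_endpoint(task_type: str, inference_id: str) -> ApiRequest:
    # "View endpoint details"
    return ApiRequest("GET", _endpoint_path(task_type, inference_id))


def delete_endpoint(task_type: str, inference_id: str) -> ApiRequest:
    # "Delete endpoints"
    return ApiRequest("DELETE", _endpoint_path(task_type, inference_id))


def run_inference(task_type: str, inference_id: str, text: str) -> ApiRequest:
    # The copied endpoint ID is what you pass when you call the endpoint.
    return ApiRequest("POST", _endpoint_path(task_type, inference_id), {"input": text})


if __name__ == "__main__":
    for req in (
        list_endpoints(),
        view_endpoint("text_embedding", "my-embeddings"),
        run_inference("text_embedding", "my-embeddings", "Hello, world"),
        delete_endpoint("text_embedding", "my-embeddings"),
    ):
        print(req.as_console(), end="\n\n")
```

Building requests as plain data keeps the sketch independent of any client library; you can paste the printed output into Dev Tools Console or send it with the HTTP client of your choice.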

To add a new inference endpoint:

1. Select the Add endpoint button.

2. Select a service from the drop-down menu.

3. Provide the required configuration details. For service-specific information, refer to the relevant inference API documentation, for example, the documentation on creating a JinaAI inference endpoint.

4. Select Save to create the endpoint.
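The steps above correspond roughly to a single create inference endpoint request, `PUT _inference/<task_type>/<inference_id>`, where the selected service becomes the `service` field and the configuration details go into `service_settings`. The following Python sketch builds such a request for a JinaAI text embedding endpoint and includes an optional helper that sends it with an Elasticsearch API key. The endpoint ID `jinaai-embeddings`, the model ID `jina-embeddings-v3`, and the `ES_URL`, `ES_API_KEY`, and `JINAAI_API_KEY` environment variables are assumptions for illustration; check the JinaAI inference API documentation for the settings your deployment requires.

```python
"""Sketch: the Add endpoint steps as a create inference endpoint request.

Assumptions: the endpoint ID, the model ID "jina-embeddings-v3", and the
ES_URL / ES_API_KEY / JINAAI_API_KEY environment variables are hypothetical.
"""
import json
import os
import urllib.request


def build_create_request(task_type: str, inference_id: str, service: str,
                         service_settings: dict) -> tuple[str, str, dict]:
    """Return (method, path, body) for PUT _inference/<task_type>/<inference_id>."""
    if not service_settings:
        # Mirrors the UI: the required configuration details must be provided.
        raise ValueError("service_settings must contain the required configuration")
    body = {"service": service, "service_settings": service_settings}
    return "PUT", f"/_inference/{task_type}/{inference_id}", body


def send(base_url: str, api_key: str, method: str, path: str, body: dict) -> dict:
    """Send the request to Elasticsearch with API key auth (not called by default)."""
    req = urllib.request.Request(
        base_url.rstrip("/") + path,
        data=json.dumps(body).encode("utf-8"),
        method=method,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"ApiKey {api_key}",
        },
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


if __name__ == "__main__":
    method, path, body = build_create_request(
        task_type="text_embedding",
        inference_id="jinaai-embeddings",  # hypothetical endpoint ID
        service="jinaai",
        service_settings={
            "model_id": "jina-embeddings-v3",  # assumed model ID
            "api_key": os.environ.get("JINAAI_API_KEY", "<jinaai-api-key>"),
        },
    )
    print(method, path)
    print(json.dumps(body, indent=2))
    # Uncomment to create the endpoint against a real cluster:
    # print(send(os.environ["ES_URL"], os.environ["ES_API_KEY"], method, path, body))
```

Keeping `send` separate from `build_create_request` lets you inspect the request body before anything is created, which is similar to reviewing the form before selecting Save.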