Stack monitoring alerts

Applies to: ECE, ECK, Elastic Cloud Hosted, Self Managed

The Elastic Stack monitoring features provide alerting rules out of the box to notify you of potential issues in the Elastic Stack. These rules are preconfigured based on best practices recommended by Elastic, but you can tailor them to meet your specific needs.

The default Watcher-based "cluster alerts" for Stack Monitoring have been recreated as rules in the Kibana alerting features. For this reason, the existing Watcher email action setting monitoring.cluster_alerts.email_notifications.email_address no longer works. The default action for all Stack Monitoring rules is to write to the Kibana logs and display a notification in the UI.

When you open Stack Monitoring for the first time, you will be asked to allow Kibana to create the default set of rules. They are initially configured to detect and notify on various conditions across your monitored clusters. You can view notifications for Cluster health, Resource utilization, and Errors and exceptions for Elasticsearch in real time.

If you initially declined to create the default rules, or you want to recreate rules that were deleted, you can have Kibana create them by going to Alerts and rules > Create default rules.

To receive external notifications for these alerts, you need to configure a connector and modify the relevant rule to use the connector. If you're using Elastic Cloud Hosted, then you can use the default Elastic-Cloud-SMTP email connector or configure your own.
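As a rough illustration of configuring your own connector programmatically rather than through the UI, the sketch below builds (but does not send) a request to Kibana's create connector endpoint. It assumes a Slack webhook connector, which is a stand-in chosen for brevity and not the email connector mentioned above. The Kibana URL, API key, connector name, and webhook URL are placeholders. Check the Kibana API documentation for your version before relying on the exact fields.

```python
import json
import urllib.request


def build_create_connector_request(kibana_url, api_key, name, webhook_url):
    """Build (without sending) a POST request that would create a connector.

    Assumptions: the endpoint path /api/actions/connector and the ".slack"
    connector type with a "webhookUrl" secret. All argument values are
    placeholders supplied by the caller.
    """
    body = {
        "name": name,
        "connector_type_id": ".slack",
        "secrets": {"webhookUrl": webhook_url},
    }
    return urllib.request.Request(
        url=kibana_url.rstrip("/") + "/api/actions/connector",
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Kibana rejects state-changing API calls without this header.
            "kbn-xsrf": "true",
            "Authorization": "ApiKey " + api_key,
        },
    )


request = build_create_connector_request(
    "https://kibana.example.com/",  # placeholder URL
    "PLACEHOLDER_API_KEY",
    "stack-monitoring-notifications",
    "https://hooks.example.com/services/PLACEHOLDER",
)
# To actually send it: urllib.request.urlopen(request)
```

After the connector exists, you still need to attach it to the relevant rule, for example through the Edit rule pane.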

Some action types are subscription features, while others are free. For a comparison of the Elastic subscription levels, see the alerting section of the Subscriptions page.

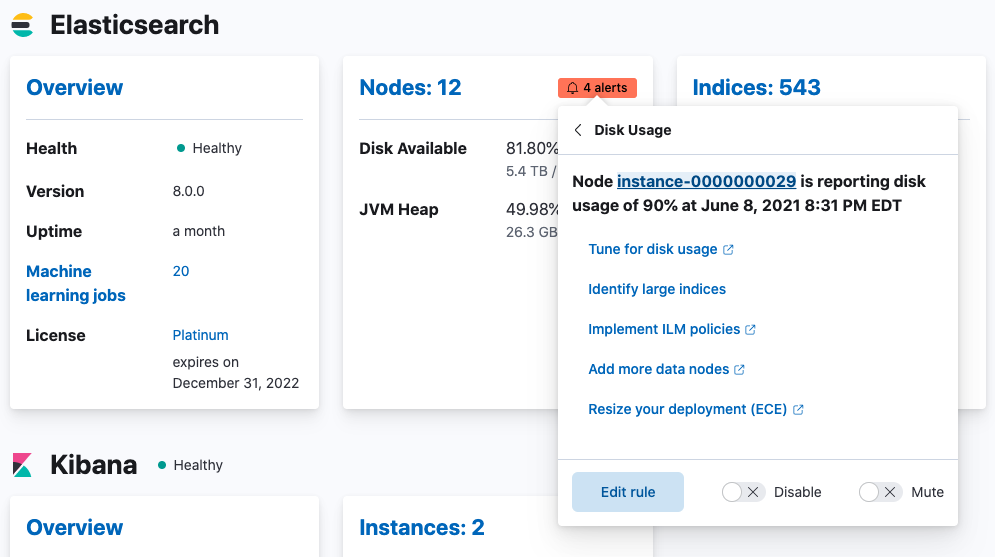

To review and modify existing Stack Monitoring rules, click Enter setup mode on the Cluster overview page. Cards with alerts configured are annotated with an indicator.

Alternatively, to manage all rules, including creating and deleting them, go to Stack Management > Rules.

- On any card showing available alerts, select the alerts indicator. Use the menu to select the type of alert for which you’d like to be notified.

- In the Edit rule pane, set how often to check for the condition and how often to send notifications.

- In the Actions section, select the connector that you'd like to use for notifications.

- Configure the connector message contents and select Save.

The following rules are preconfigured for stack monitoring.

CPU usage threshold

Disk usage threshold

JVM memory threshold

Missing monitoring data

Thread pool rejections (search/write)

This rule checks for Elasticsearch nodes that experience thread pool rejections.

By default, the condition is met when there are 300 or more rejections over the last 5 minutes. The default rule runs its check every 1 minute and re-notifies at an interval of 1 day. You can set thresholds independently for search and write rejections.
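The defaults described for this rule can be sketched as a small evaluation function. This is only an illustration of the threshold, look-back window, and re-notify logic, not how Kibana implements the rule. The RejectionRule class, the function names, and the sample format (per-interval rejection counts for one node and one thread pool type) are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


@dataclass
class RejectionRule:
    """Hypothetical container for the defaults described in the text."""
    threshold: int = 300                             # rejections
    window: timedelta = timedelta(minutes=5)         # look-back period
    check_interval: timedelta = timedelta(minutes=1)
    renotify_interval: timedelta = timedelta(days=1)


def condition_met(samples: List[Tuple[datetime, int]],
                  now: datetime,
                  rule: RejectionRule) -> bool:
    """Return True if rejections inside the look-back window reach the threshold."""
    start = now - rule.window
    total = sum(count for ts, count in samples if start < ts <= now)
    return total >= rule.threshold


def should_notify(met: bool,
                  now: datetime,
                  last_notified: Optional[datetime],
                  rule: RejectionRule) -> bool:
    """Notify on the first match, then only after the re-notify interval has passed."""
    if not met:
        return False
    return last_notified is None or now - last_notified >= rule.renotify_interval


# Separate rule objects allow independent thresholds for search and write.
search_rule = RejectionRule(threshold=300)
write_rule = RejectionRule(threshold=500)
```

Keeping one rule object per thread pool type mirrors the point that search and write thresholds are configured independently.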

CCR read exceptions

Large shard size

This rule checks for a large average shard size (across associated primaries) on any of the specified data views in an Elasticsearch cluster.

The condition is met if an index’s average shard size is 55 GB or higher in the last 5 minutes. By default, the rule matches the index pattern -.* and runs its check every 1 minute, with a re-notify interval of 12 hours.
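The average shard size check can be illustrated with a short calculation. The sketch assumes that the average is the total primary store size divided by the number of primary shards, and that the unit is binary gigabytes (1024^3 bytes). Both are assumptions made for illustration, and the function names and input format are hypothetical.

```python
from typing import Dict, List, Tuple

BYTES_PER_GB = 1024 ** 3  # assumption: binary gigabytes


def average_primary_shard_size_gb(primary_store_bytes: int,
                                  primary_shard_count: int) -> float:
    """Average size of an index's primary shards, in GB."""
    if primary_shard_count <= 0:
        raise ValueError("an index must have at least one primary shard")
    return primary_store_bytes / primary_shard_count / BYTES_PER_GB


def large_shard_indices(indices: Dict[str, Tuple[int, int]],
                        threshold_gb: float = 55.0) -> List[str]:
    """Return the names of indices whose average primary shard size meets the threshold.

    `indices` maps an index name to (primary store size in bytes,
    number of primary shards).
    """
    return sorted(
        name
        for name, (store_bytes, shard_count) in indices.items()
        if average_primary_shard_size_gb(store_bytes, shard_count) >= threshold_gb
    )


example = {
    "logs": (120 * BYTES_PER_GB, 2),     # 60 GB average
    "metrics": (100 * BYTES_PER_GB, 2),  # 50 GB average
    "edge": (110 * BYTES_PER_GB, 2),     # exactly 55 GB average
}
flagged = large_shard_indices(example)
```

In this example, only the indices at or above the 55 GB default are returned, which matches the "55 GB or higher" wording of the condition.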

Cluster alerting

These rules check the current status of your Elastic Stack. You can drill down into the metrics to view more information about your cluster and specific nodes, instances, and indices.

An action is triggered if any of the following conditions are met within the last minute:

- Elasticsearch cluster health status is yellow (missing at least one replica) or red (missing at least one primary).

- Elasticsearch version mismatch. You have Elasticsearch nodes with different versions in the same cluster.

- Kibana version mismatch. You have Kibana instances with different versions running against the same Elasticsearch cluster.

- Logstash version mismatch. You have Logstash nodes with different versions reporting stats to the same monitoring cluster.

- Elasticsearch nodes changed. You have Elasticsearch nodes that were recently added or removed.

- Elasticsearch license expiration. The cluster’s license is about to expire.

If you do not preserve the data directory when upgrading a Kibana or Logstash node, the instance is assigned a new persistent UUID and shows up as a new instance.

Subscription license expiration. As the expiration date approaches, you receive notifications with a severity level that depends on how close the expiration date is:

- 60 days: Informational alert

- 30 days: Low-level alert

- 15 days: Medium-level alert

- 7 days: Severe-level alert

The 60-day and 30-day thresholds are skipped for Trial licenses, which are only valid for 30 days.
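The severity schedule above can be expressed as a simple lookup. This sketch assumes that each threshold is inclusive (for example, exactly 30 days remaining counts as a low-level alert) and that a trial license is identified by the string "trial". The function name and both of these details are assumptions for illustration.

```python
from typing import Optional

# (days remaining, severity), checked from the most urgent threshold first.
THRESHOLDS = [
    (7, "severe"),
    (15, "medium"),
    (30, "low"),
    (60, "informational"),
]

# Thresholds that are skipped for trial licenses.
TRIAL_SKIPPED = {30, 60}


def license_alert_level(days_remaining: int,
                        license_type: str = "platinum") -> Optional[str]:
    """Return the alert severity for a license, or None if no alert applies."""
    for limit, level in THRESHOLDS:
        if days_remaining <= limit:
            if license_type == "trial" and limit in TRIAL_SKIPPED:
                return None
            return level
    return None
```

With these assumptions, a trial license with 20 days remaining produces no alert, while a non-trial license with the same time remaining produces a low-level alert.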

For the Elasticsearch nodes changed alert, if your cluster has only one master node, no notification is sent while that master node is being vacated, because Kibana needs to communicate with the master node to send a notification. One way to avoid this is to ship your deployment metrics to a dedicated monitoring cluster.